LeoFS Storage Overview

This may be skipped

LeoFS is not required for a minimal setup. These steps can be skipped if, at the end of the FiFo setup, instead of calling `sniffle-admin init-leo`, the S3 host is set to `no_s3` by executing `sniffle-admin config set storage.s3.host no_s3`.

Please be aware that without LeoFS, backups will not function, and setting up the correct imgadm sources is your responsibility.

This configuration is meant purely for test setups and, while provided, is currently considered unsupported.

LeoFS is what Project FiFo uses for its object storage. LeoFS is an unstructured object store for the web: a highly available, distributed, eventually consistent and scalable storage system. It supports large volumes of many kinds of unstructured data and is S3 API compatible. Project FiFo uses LeoFS to store VM datasets, snapshots and backups.

Project FiFo provides all the packages needed to get the LeoFS services running within SmartOS zones. This makes it easy to get your underlying LeoFS storage in place prior to installing FiFo. Apart from the unsupported test configuration described above, a fully functional LeoFS storage system is required for Project FiFo to work.

Overview

This guide will NOT attempt to be a comprehensive document that covers every possible installation scenario. Instead it will cover a basic, best-practice bare-minimum setup that is considered sane for a basic deployment.

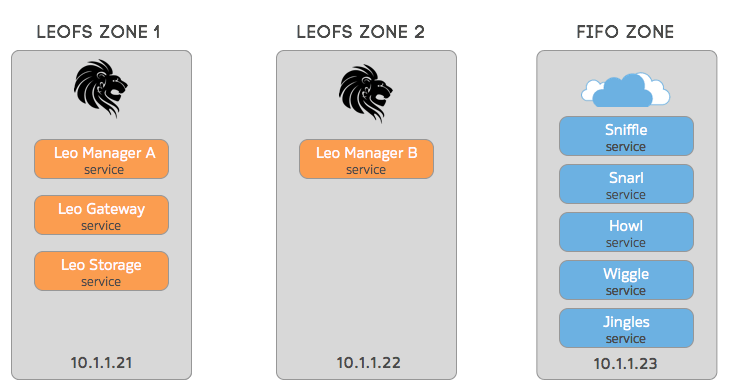

As part of the LeoFS setup, we will create 2 dedicated zones. In each zone we will install specific LeoFS packages and configure each service. It is recommended that you get your LeoFS zones up and running first, then continue with the FiFo setup as per the installation manual.

A LeoFS Cluster can be thought of as elastic storage and as such, can stretch and grow as needed. LeoFS consists of 3 services that depend on Erlang.

| Service | Role |
| --- | --- |
| LeoFS Gateway | Handles HTTP requests and responses from clients. |
| LeoFS Storage | Handles GET, PUT and DELETE operations on objects, as well as metadata. |
| LeoFS Manager | Monitors the LeoFS Gateway and the LeoFS Storage nodes. |

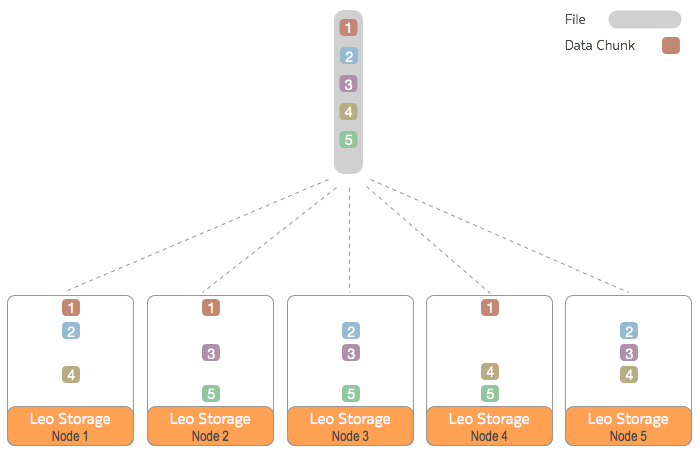

The storage node is where your data physically resides. In production environments it is recommended that you have multiple storage nodes distributed across multiple physical servers. In addition, you should set your consistency level and number of replicas to values that match the level of high availability you require.
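As a rough illustration of how such settings relate to availability, the sketch below assumes a quorum-style model with three values: N (replicas per object), W (replicas that must acknowledge a write) and R (replicas that must answer a read). The class `Consistency` and its fields `n`, `w` and `r` are hypothetical names chosen for this example, not LeoFS configuration keys; consult the LeoFS documentation for the actual settings.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Consistency:
    """Hypothetical quorum settings; the names are illustrative only."""
    n: int  # replicas stored per object
    w: int  # replicas that must acknowledge a write (assumed parameter)
    r: int  # replicas that must answer a read (assumed parameter)

    def __post_init__(self) -> None:
        # A quorum cannot be smaller than 1 or larger than the replica count.
        if not (1 <= self.w <= self.n and 1 <= self.r <= self.n):
            raise ValueError("w and r must be between 1 and n")

    def tolerated_write_failures(self) -> int:
        """Replicas of an object that may be down while writes still succeed."""
        return self.n - self.w

    def tolerated_read_failures(self) -> int:
        """Replicas of an object that may be down while reads still succeed."""
        return self.n - self.r


levels = Consistency(n=3, w=2, r=1)
print(
    f"writes tolerate {levels.tolerated_write_failures()} failed replica(s), "
    f"reads tolerate {levels.tolerated_read_failures()}"
)
```

Under this model, raising W or R trades availability for stronger consistency, while raising N increases how many replica failures can be absorbed.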

It is very important to understand that your configured consistency levels, not the number of storage nodes you have, dictate fault tolerance and high availability. Once your consistency levels have been defined and your storage cluster has been started, they CANNOT be changed, so please design for this accordingly.

To further illustrate this point, in the example diagram we have 5 LeoFS storage nodes, with the cluster configured for N=3 replicas. This ensures that 3 copies of all data exist at all times.
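The same point can be sketched in a few lines of Python. This is a simplified stand-in, not LeoFS's real placement algorithm: objects are numbered integers, and each one is placed on N consecutive nodes starting at `object_id % len(nodes)`. The function names and the `storage_<i>` node names are hypothetical. The sketch only checks whether at least one copy of every object survives; whether reads and writes still succeed also depends on the configured consistency levels.

```python
from itertools import combinations


def replica_nodes(object_id: int, nodes: list[str], n: int) -> list[str]:
    """Place an object on n distinct nodes, walking forward from a start index."""
    start = object_id % len(nodes)
    return [nodes[(start + i) % len(nodes)] for i in range(n)]


def survives(placement: dict[int, list[str]], failed: set[str]) -> bool:
    """True if every object keeps at least one replica on a healthy node."""
    return all(set(replicas) - failed for replicas in placement.values())


def worst_case_ok(nodes: list[str], n: int, failures: int, object_ids) -> bool:
    """True if no combination of `failures` failed nodes loses any object."""
    placement = {oid: replica_nodes(oid, nodes, n) for oid in object_ids}
    return all(
        survives(placement, set(failed))
        for failed in combinations(nodes, failures)
    )


for total in (5, 10):
    nodes = [f"storage_{i}" for i in range(total)]
    two_down = worst_case_ok(nodes, n=3, failures=2, object_ids=range(20))
    three_down = worst_case_ok(nodes, n=3, failures=3, object_ids=range(20))
    print(total, two_down, three_down)
# prints:
# 5 True False
# 10 True False
```

With N=3, any 2 node failures leave every object with a surviving copy, but some combination of 3 failures can remove all copies of an object, whether the cluster has 5 nodes or 10. Adding nodes increases capacity, not the number of simultaneous failures the data can absorb.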

Next up

Now that you're familiar with the LeoFS fundamentals, let's move on to installing LeoFS.
