Metric Setup Overview

The ability to analyse metrics and historical data is important for professional cloud orchestration. FiFo includes a comprehensive metric gathering mechanism that collects thousands of metrics from a system with virtually no impact on the system itself.

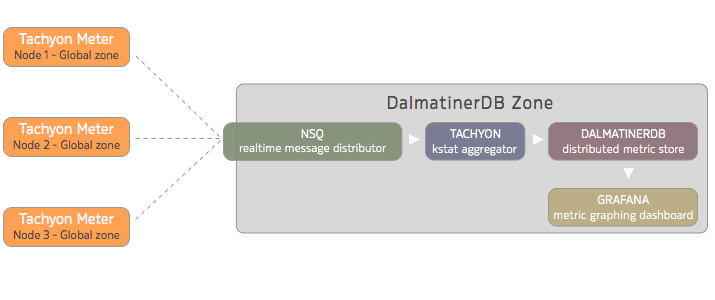

This data is sent from your compute nodes to a realtime distributed messaging service (NSQ). An aggregation and translation service (Tachyon) then prepares the data for optimal storage in the purpose-built metric database (DalmatinerDB). These historical metrics are used both for the metric graphs within the Cerberus web UI and for the optional metric graphing dashboard (Grafana).

Overview

This guide does NOT attempt to cover every possible installation scenario. Instead, it covers a basic, best-practice, bare-minimum setup that is considered sane for a small deployment.

| Component | Description |
| --- | --- |
| Tachyon Meter | KSTAT gathering and sending service running in the Global Zone. |
| NSQ | A realtime distributed messaging platform. |
| Tachyon Aggregator | KSTAT aggregating, decoding and translating service. |
| DalmatinerDB | A fast, distributed metric store. |
| Grafana | Dashboard builder for time series metrics with a web front-end. |

In this guide we will set up all the metric-related services within a single zone.

In production environments it is recommended that you run separate, distributed NSQ processes, preferably one per physical server. Design and configure your services around the number of data replicas and the level of high availability that you require.

You should also set parameters within the config files that align with your data retention policies and the amount of physical storage you have available. With ZFS compression enabled (LZ4 recommended), each metric consumes about 12 bits per second. At one data point per second, that is 604800 data points per metric per week, which equates to roughly 1 MB of disk space per metric per week.
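The sizing arithmetic above can be sketched as a small calculator. This is only a rough estimate: it uses the ~12 bits per data point figure quoted above and assumes one data point per second. The function name `estimate_bytes` and the `replicas` parameter are hypothetical, and multiplying by `replicas` assumes that every replica stores a full copy of the data.

```python
# Rough disk-usage estimate for stored metrics.
# Assumptions (not taken from any FiFo or DalmatinerDB API):
#   - one data point per metric per second
#   - ~12 bits per data point on disk with LZ4 compression
#   - each replica holds a full copy of the data

SECONDS_PER_WEEK = 7 * 24 * 60 * 60  # 604800 data points per metric per week
BITS_PER_POINT = 12                  # approximate compressed size per data point


def estimate_bytes(metrics: int, weeks: float, replicas: int = 1) -> float:
    """Return the approximate number of bytes needed on disk."""
    points = SECONDS_PER_WEEK * weeks
    bits = points * BITS_PER_POINT * metrics * replicas
    return bits / 8


if __name__ == "__main__":
    # One metric kept for one week: 907200 bytes, i.e. roughly 1 MB.
    print(f"{estimate_bytes(metrics=1, weeks=1) / 1e6:.2f} MB")  # prints "0.91 MB"
```

As an illustration with made-up numbers, `estimate_bytes(metrics=5000, weeks=4)` comes to about 18 GB, which gives a starting point for choosing a retention period that fits the storage you have available.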

Next up

Now that you're familiar with the FiFo metric fundamentals, let's move on to the metric setup guide.
